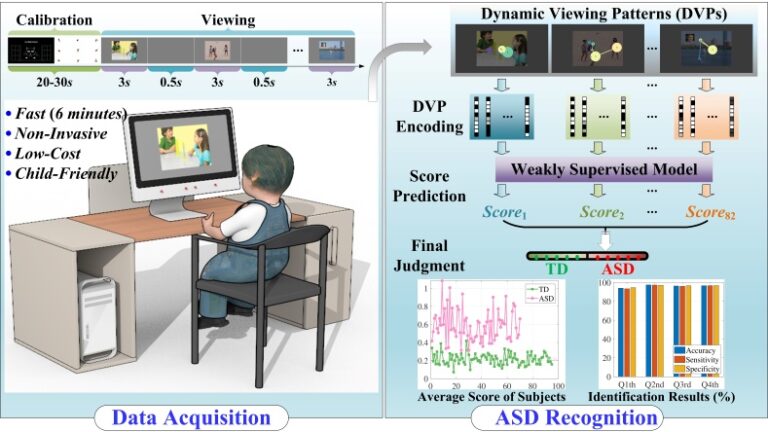

Autism spectrum disorder (ASD) affects nearly 1 in 44 children younger than 8 years old in the United States, and the situation may be even worse in remote areas. Studies have shown that early detection and timely intervention are critical for alleviating the symptoms of ASD and reducing the harm caused by the disorder. However, existing screening approaches are difficult to apply in remote areas because of the shortage of professionals and high-tech instruments. To address this problem, we developed a fast, accurate, and scalable method for screening children with ASD.

We first illustrated the limitations of traditional hand-crafted features for accurate classification through a statistical comparison of the two groups of data across 15 features. We then explored and validated the importance of dynamic viewing patterns (DVPs) across time and stimuli for ASD classification. Finally, we proposed a deep weakly supervised model that learns from DVPs for ASD screening. In training, a long short-term memory network learned the mapping between the autoencoder-encoded dynamic patterns and the labels. In testing, we fed the encoded DVP of each undiagnosed child into the trained network and predicted the diagnosis category from the scores on all stimuli.
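The final testing step, turning per-stimulus scores into one subject-level decision, can be sketched as follows. This is a minimal illustration and not the authors' implementation: the function name `predict_subject`, the use of the mean as the aggregation rule, the 0.5 threshold, and the label strings are all assumptions made for the example, since the abstract does not specify how the scores are combined.

```python
from statistics import mean


def predict_subject(stimulus_scores, threshold=0.5):
    """Aggregate per-stimulus scores into one subject-level label.

    Assumptions (not from the paper): each score is the network's
    ASD probability for one stimulus, scores are averaged, and the
    subject is flagged when the average reaches `threshold`.
    """
    if not stimulus_scores:
        raise ValueError("need at least one stimulus score")
    subject_score = mean(stimulus_scores)
    label = "ASD" if subject_score >= threshold else "non-ASD"
    return label, subject_score


# Hypothetical scores for one child over four stimuli (toy values).
label, score = predict_subject([0.9, 0.7, 0.4, 0.8])
print(label, round(score, 2))
```

Averaging is only one plausible choice; majority voting over per-stimulus decisions would be an equally simple alternative under the same assumptions.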

In a multi-center evaluation of 165 subjects aged 3-6 years from different areas of China, our method achieved an average recognition accuracy of 96.73% (sensitivity 96.85%, specificity 96.63%). The results show that the DVP-based model is effective for improving the accuracy of auxiliary ASD screening. In conclusion, this is the first study demonstrating the importance and performance benefits of dynamic viewing information for ASD classification. Additionally, the proposed DVP-based ASD identification provides strong support for future low-cost, non-invasive, intelligent ASD screening.
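For readers less familiar with the reported metrics, the sketch below shows how accuracy, sensitivity, and specificity are computed from binary predictions. The function name `screening_metrics` and the label arrays are hypothetical toy values chosen for illustration; they are not the study's data and do not reproduce its results.

```python
def screening_metrics(y_true, y_pred):
    """Compute accuracy, sensitivity, and specificity.

    Labels are assumed to be 1 for ASD and 0 for non-ASD.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)  # ASD correctly flagged
    tn = sum(t == 0 and p == 0 for t, p in pairs)  # non-ASD correctly cleared
    fp = sum(t == 0 and p == 1 for t, p in pairs)  # non-ASD wrongly flagged
    fn = sum(t == 1 and p == 0 for t, p in pairs)  # ASD missed
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),  # true positive rate
        "specificity": tn / (tn + fp),  # true negative rate
    }


# Toy labels for eight hypothetical subjects (not study data).
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]
print(screening_metrics(y_true, y_pred))
```

Sensitivity is the fraction of children with ASD that the screen catches, while specificity is the fraction of children without ASD that it correctly clears; both matter in a screening setting.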