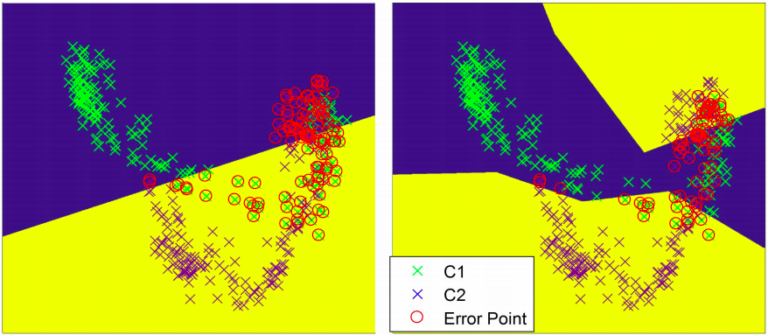

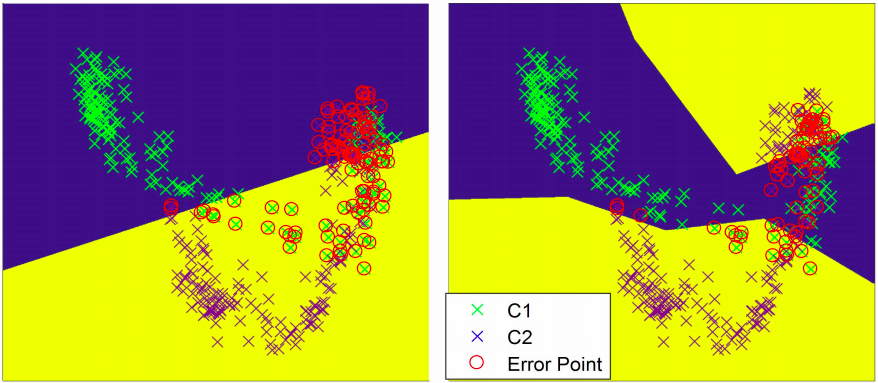

The ability to predict wrist and hand motions simultaneously is essential for natural control of hand prostheses. In this paper, we propose a novel method that combines subclass discriminant analysis (SDA) and principal component analysis for the simultaneous prediction of wrist rotation (pronation/supination) and finger gestures using wearable ultrasound. We tested the method on eight finger gestures performed with concurrent wrist rotations. The results showed that SDA accurately classified both finger gestures and wrist rotations while the wrist was rotating dynamically. When the wrist rotations were grouped into three subclasses, 99.2 ± 1.2% of finger gestures and 92.8 ± 1.4% of wrist rotations were classified correctly. Moreover, we found that the first principal component (PC1) of the selected ultrasound features was linearly related to the wrist rotation angle regardless of the finger gesture. We further used PC1 in an online tracking task for continuous wrist control and demonstrated that a wrist tracking precision (R²) of 0.954 ± 0.012 and a finger gesture classification accuracy of 96.5 ± 1.7% can be achieved simultaneously with only two minutes of user training. Our proposed simultaneous wrist/hand control scheme is training-efficient and robust, paving the way for musculature-driven artificial hand control and rehabilitation treatment.
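To make the PC1 observation concrete, the following is a minimal sketch of how a linear map from PC1 to wrist angle could be fitted and scored with R². It is not the authors' pipeline: the data are synthetic, and the feature dimension, angle range, gesture offsets, noise level, and the helper names `fit_pc1_angle_map`, `predict_angle`, and `r_squared` are all assumptions made for illustration.

```python
import numpy as np


def fit_pc1_angle_map(features, angles):
    """Fit PCA on the features, then a line mapping PC1 scores to angles."""
    mean = features.mean(axis=0)
    centered = features - mean
    # Rows of vt are the principal axes, ordered by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    pc1_axis = vt[0]
    pc1_scores = centered @ pc1_axis
    # The sign of a principal axis is arbitrary; the fitted slope absorbs it.
    slope, intercept = np.polyfit(pc1_scores, angles, 1)
    return mean, pc1_axis, slope, intercept


def predict_angle(features, mean, pc1_axis, slope, intercept):
    """Project new feature vectors onto PC1 and apply the fitted line."""
    return slope * ((features - mean) @ pc1_axis) + intercept


def r_squared(y_true, y_pred):
    """Coefficient of determination between true and predicted values."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot


# Synthetic stand-in for ultrasound features (all values are assumptions):
# one direction in feature space varies linearly with wrist angle, and each
# of the eight gestures adds a smaller gesture-specific offset.
rng = np.random.default_rng(0)
n_samples, n_features, n_gestures = 800, 16, 8
angles = rng.uniform(-90.0, 90.0, n_samples)        # hypothetical range, degrees
gestures = rng.integers(0, n_gestures, n_samples)
direction = rng.normal(size=n_features)
direction /= np.linalg.norm(direction)
gesture_offsets = rng.normal(scale=5.0, size=(n_gestures, n_features))
features = (
    np.outer(angles, direction)
    + gesture_offsets[gestures]
    + rng.normal(scale=1.0, size=(n_samples, n_features))
)

# Fit on the first 600 samples, evaluate on the held-out remainder.
model = fit_pc1_angle_map(features[:600], angles[:600])
r2 = r_squared(angles[600:], predict_angle(features[600:], *model))
print(f"held-out R^2 of the PC1-based wrist angle estimate: {r2:.3f}")
```

In this sketch the mapping works only because the angle-related variance dominates the gesture-related variance by construction. Whether that holds for real ultrasound features depends on the feature selection step described in the paper.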
