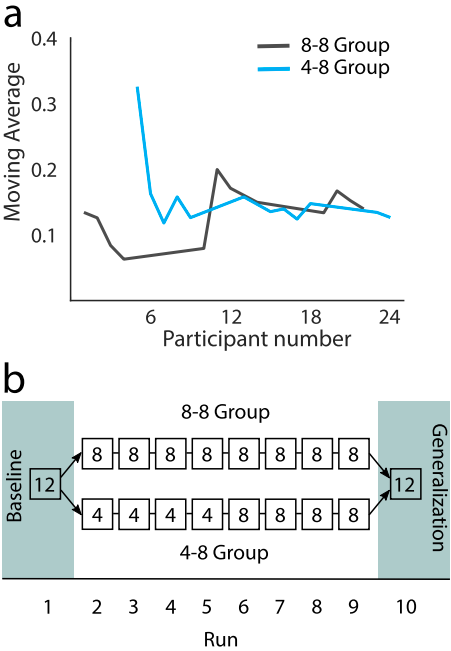

Motor learning-based methods offer an alternative paradigm to machine learning-based methods for controlling upper-limb prosthetics. Within this paradigm, the patterns of muscular activity used for control can differ from those that control biological limbs. Practice expedites the learning of these new, functional patterns of muscular activity. We envisage that these methods can result in enhanced control without increasing device complexity. However, key questions about the training protocols, generalization and scalability of motor learning-based methods remain open. In this work, we pursue three objectives: 1) to validate the motor learning-based abstract myoelectric control approach with people with upper-limb difference for the first time; 2) to test whether, after training, participants can generalize their learning to tasks of increased difficulty; and 3) to show that abstract myoelectric control scales with additional input signals, offering a larger control range. In three experiments, 25 limb-intact participants and 8 people with a limb difference (congenital and acquired) used a motor learning-based, myoelectrically controlled interface. We show that participants with upper-limb difference can learn to control the interface and that performance increases with experience. Across experiments, participant performance on easier, lower-target-density tasks generalized to more difficult, higher-target-density tasks. A proof-of-concept study demonstrates that learning-based control scales with additional myoelectric channels. Our results show that human motor learning-based approaches can expand the number of distinct outputs from the musculature, thereby increasing the functionality of prosthetic hands and providing a viable alternative to machine learning.