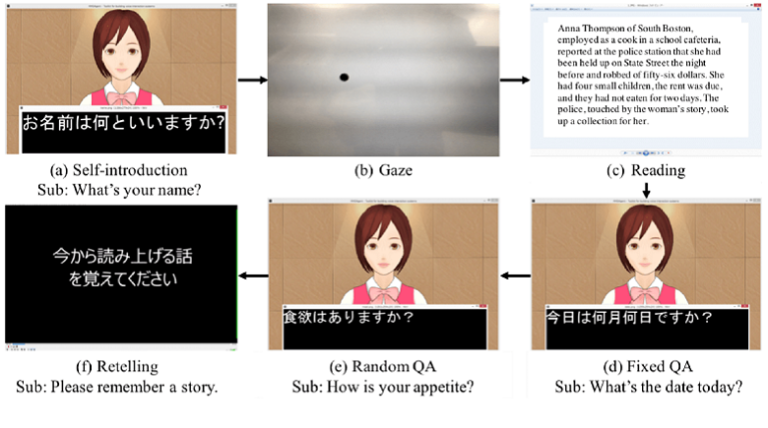

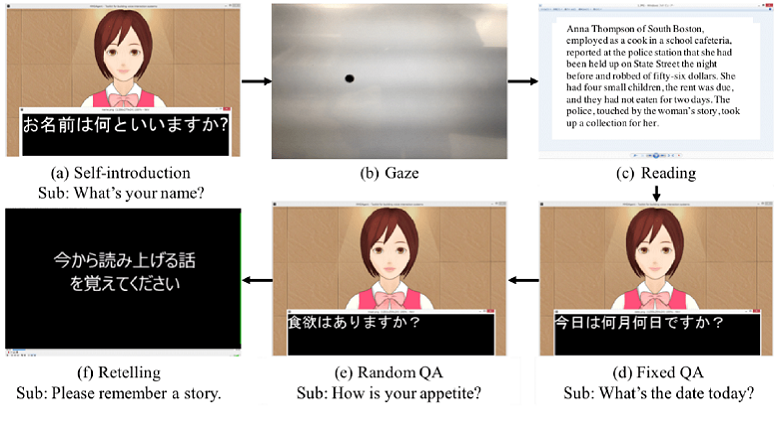

This paper proposes a new approach to automatically detecting dementia. Although previous work has detected dementia from speech and language attributes, most of it has relied on picture descriptions, narratives, and cognitive tasks. We instead propose a computer avatar with spoken dialog functionality that asks spoken questions based on the mini-mental state examination, the Wechsler memory scale-revised, and other related neuropsychological questions. We recorded spoken dialogues between the avatar and 29 participants (14 with dementia and 15 healthy controls) and extracted various audiovisual features. We then used these features to predict dementia with two machine learning algorithms: support vector machines and logistic regression. The support vector machines outperformed logistic regression and, using the extracted features, classified the participants into the two groups with a detection performance of 0.93, measured as the area under the receiver operating characteristic (ROC) curve. We also identified several contributing features that had not been reported before, e.g., gap before speaking, variations of fundamental frequency, voice quality, and ratio of smiling. We conclude that our system has the potential to detect dementia through spoken dialog and can assist health care workers. In addition, these findings could help medical personnel detect signs of dementia.
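To make the reported metric concrete, the following is a minimal sketch of how an area under the ROC curve can be computed from classifier scores. It is not the authors' evaluation code: the function name `roc_auc`, the label convention (1 for dementia, 0 for control), and the toy labels and scores are all assumptions made for illustration. The sketch uses the rank-sum identity, under which the area equals the probability that a randomly chosen positive example is scored higher than a randomly chosen negative one, with ties counted as one half.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Area under the ROC curve via the rank-sum (Mann-Whitney U) identity.

    labels: 1 for the positive class, 0 for the negative class (assumed convention).
    scores: classifier outputs, where a higher score means "more likely positive".
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")

    # Count positive/negative pairs that are ranked correctly; ties count half.
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical toy data, not taken from the study.
toy_labels = [1, 1, 1, 0, 0, 0]
toy_scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.1]
toy_auc = roc_auc(toy_labels, toy_scores)  # 8 of 9 pairs are ranked correctly
```

A value of 1.0 means every positive example is scored above every negative one, and 0.5 corresponds to chance-level ranking, so a reported 0.93 indicates that most dementia/control pairs are ordered correctly by the classifier's scores.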