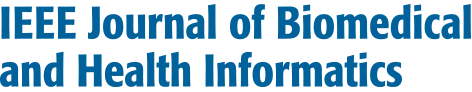

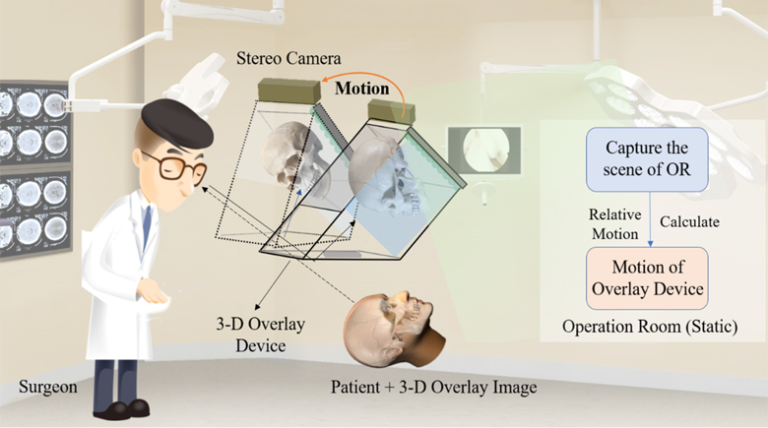

Augmented reality (AR) surgical navigation systems based on image overlay have been used in minimally invasive surgery (MIS). However, conventional systems still suffer from a limited viewing zone and a lack of intuitive three-dimensional (3D) image guidance, and they cannot be moved freely. To fuse the 3D overlay image with the patient in situ, the overlay device must be tracked while it moves, which requires a direct line of sight between the optical markers and the tracker camera. We propose a moving-tolerant AR surgical navigation system using autostereoscopic image overlay that avoids the use of an optical tracking system during the intraoperative period. Rather than tracking the overlay device directly, the system captures binocular image sequences of environmental changes in the operating room to locate the overlay device. A direct line of sight between the tracker and the tracked devices is therefore no longer required, and the movable range of the system is not limited by the range of the tracker camera. Computer simulation experiments demonstrate the reliability of the proposed moving-tolerant AR surgical navigation system. We also built a computer-generated integral photography (CGIP)-based 3D overlay AR system to validate the feasibility of the proposed moving-tolerant approach. Qualitative and quantitative experiments demonstrate that the proposed system consistently fuses the 3D image with the patient, thus increasing the feasibility and reliability of traditional 3D overlay AR surgical navigation systems.